Used by 19 of the Fortune 50

10+ billion observations/month

100,000+ engineers building on Langfuse

Open Source LLM Engineering Platform

Debug AI Applications and Agents in minutes. Spot issues before your users do. Collaborate with your team to continuously improve on cost, latency and quality. Any model, any framework. Based on OpenTelemetry.

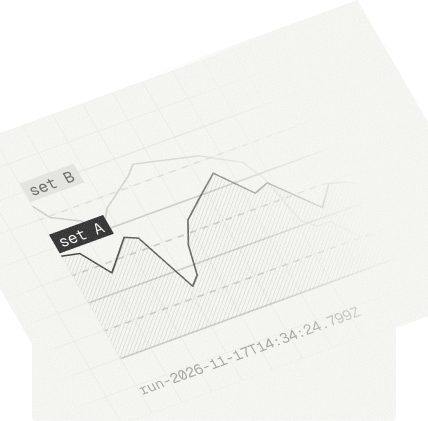

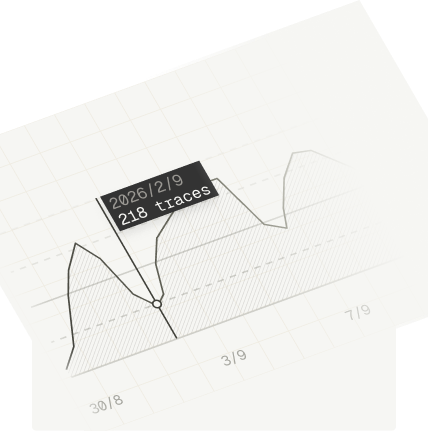

Gain deep visibility into your traces

Launch, observe, improve — repeat.

Langfuse helps you ship AI agents and products from prototype to production and beyond. Once you are in production, we power your continuous improvement loop, using production data to make your agents and LLM applications ever more powerful.

The full LLM engineering loop

Langfuse brings observability, prompts, evals, experiments, and human annotation into one connected workflow, so you can move from prototype to production and keep improving with real usage data.

All the tools, one integrated platform.

One integrated platform to trace, manage prompts, evaluate, and experiment from prototype to production scale.

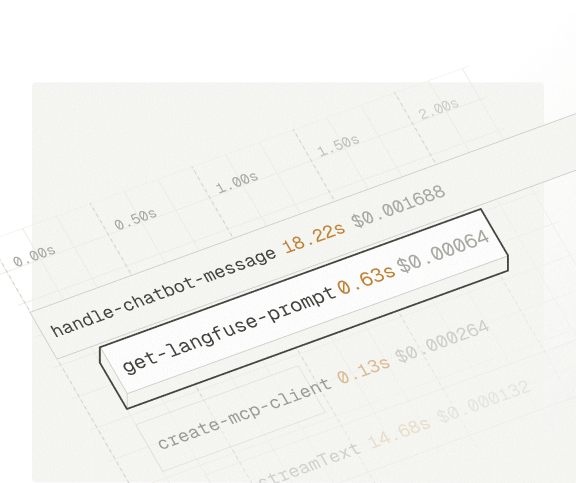

Observability

Hierarchical traces capture every LLM call, tool invocation, and retrieval step. Filter by user, session, cost, latency, or custom metadata.
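To make the idea of a hierarchical trace concrete, here is a minimal Python sketch of a tree of observations whose cost rolls up from nested steps, plus a simple filter. The `Observation` class, its fields, and the `filter_traces` helper are hypothetical names chosen for illustration; they are not the Langfuse SDK or its data model.

```python
from dataclasses import dataclass, field


@dataclass
class Observation:
    """One node in a trace tree (hypothetical model, not the Langfuse schema)."""
    name: str
    kind: str  # e.g. "llm", "tool", "retrieval", "span"
    latency_ms: float = 0.0
    cost_usd: float = 0.0
    metadata: dict = field(default_factory=dict)
    children: list["Observation"] = field(default_factory=list)

    def total_cost(self) -> float:
        # Cost rolls up from this node and every nested step.
        return self.cost_usd + sum(c.total_cost() for c in self.children)

    def walk(self):
        # Depth-first traversal over this node and all descendants.
        yield self
        for child in self.children:
            yield from child.walk()


def filter_traces(traces, user_id=None, min_cost=0.0):
    """Keep root observations that match a user and a minimum total cost."""
    return [
        t for t in traces
        if (user_id is None or t.metadata.get("user_id") == user_id)
        and t.total_cost() >= min_cost
    ]


# Example trace: one request with a retrieval step and an LLM call that uses a tool.
trace = Observation(
    name="answer-question", kind="span", metadata={"user_id": "u-1"},
    children=[
        Observation("retrieve-docs", "retrieval", latency_ms=40.0),
        Observation(
            "call-model", "llm", latency_ms=900.0, cost_usd=0.002,
            children=[Observation("search-tool", "tool", latency_ms=120.0, cost_usd=0.001)],
        ),
    ],
)
```

Calling `filter_traces([trace], user_id="u-1", min_cost=0.001)` keeps the example trace, because its rolled-up cost includes the nested tool call.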

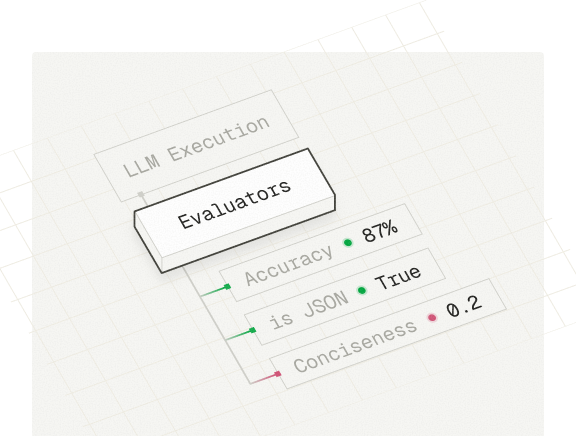

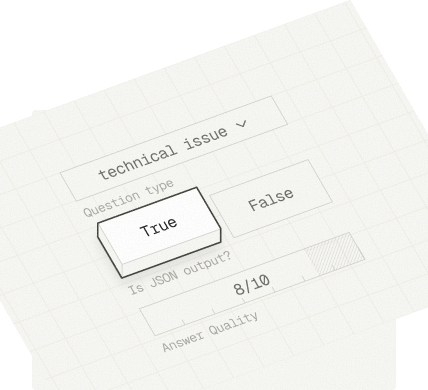

Evaluation

LLM-as-a-judge, heuristic functions, or human review. Run evaluators on production data or during experiments.
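As a rough illustration of the "heuristic functions" option, the sketch below scores a batch of model outputs with two plain Python checks and averages the results. The function names, the email pattern, and the 0-to-1 scoring convention are assumptions made for this example, not a Langfuse API.

```python
import re
from statistics import mean

# Simplified email pattern, good enough for a demo heuristic.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def no_email_score(output: str) -> float:
    """Return 1.0 when no email address appears in the output, else 0.0."""
    return 0.0 if EMAIL.search(output) else 1.0


def brevity_score(output: str, max_words: int = 50) -> float:
    """Return 1.0 when the output stays within the word budget, else 0.0."""
    return 1.0 if len(output.split()) <= max_words else 0.0


def evaluate(outputs, evaluators):
    """Average each evaluator's score over a batch of outputs."""
    return {name: mean(fn(o) for o in outputs) for name, fn in evaluators.items()}
```

The same `evaluate` helper could be pointed at sampled production outputs or at the results of an experiment run; only the input list changes.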

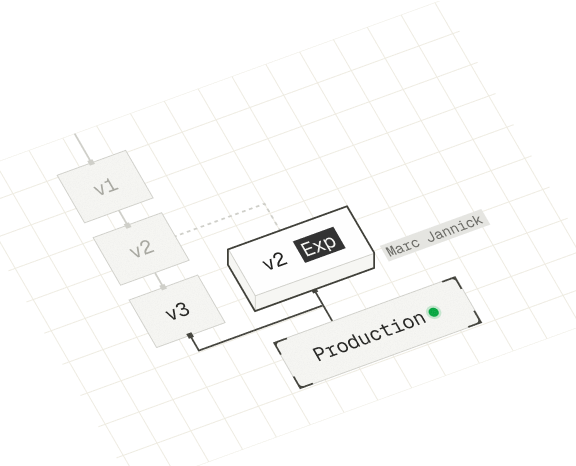

Prompt Management

Separate prompts from code with one-click deployments and rollbacks. Turn improving your production prompts into a team sport.
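One way to picture deployments and rollbacks is a versioned store in which a label such as `production` points at a specific prompt version. The sketch below is a toy in-memory model; `PromptRegistry` and its methods are hypothetical and do not represent the Langfuse SDK.

```python
class PromptRegistry:
    """Toy in-memory prompt store with versioning and labels (illustrative only)."""

    def __init__(self):
        self._versions = {}  # prompt name -> list of templates (version 1 first)
        self._labels = {}    # (prompt name, label) -> version number

    def create(self, name, template):
        """Store a new version of a prompt and return its version number."""
        versions = self._versions.setdefault(name, [])
        versions.append(template)
        return len(versions)

    def deploy(self, name, version, label="production"):
        """Point a label at a version; rolling back is deploying an older version."""
        if not 1 <= version <= len(self._versions.get(name, [])):
            raise ValueError(f"unknown version {version} for prompt {name!r}")
        self._labels[(name, label)] = version

    def get(self, name, label="production"):
        """Fetch the template currently served under a label."""
        version = self._labels[(name, label)]
        return self._versions[name][version - 1]
```

Because application code only asks for the `production` label, moving that label to another version changes the served prompt without a code deploy.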

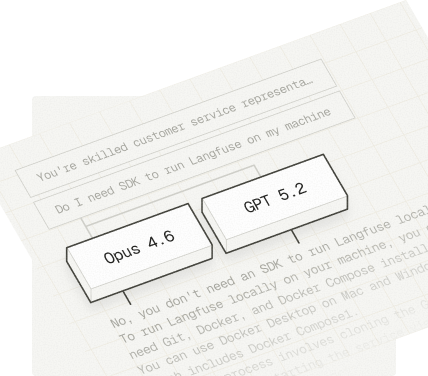

Playground

Test prompts on real production inputs and compare models side-by-side.

Experiments

Define test cases and run experiments. Compare results side by side.

Human Annotation

Collaborative human-in-the-loop workflows to review traces and create golden datasets.

Cost & Latency

Monitor cost, latency, and quality with dashboards and automated alerts.

Canva's AI team relies on Langfuse to trace and debug their generative design features in production.

Works with any stack.

Langfuse works with any language and framework supporting OTel instrumentation. Additionally, 80+ integrations make getting started even easier. No framework lock-in.

Agent Frameworks

80+ more integrations

Open Platform. Open Source.

We are huge fans of open standards and data portability. Langfuse won't lock in your data, ever.

MIT License

APIs & Exports

Active OSS Community

Made for developers, loved by agents.

Langfuse works by default with your coding agents. Install our MCP servers and CLI to develop at the speed of thought. Let Claude Code, Cursor and Codex do the hard work.

A ready-made skill for your coding agent. Manage prompts, traces, and evals through natural language — no manual API calls needed.

Full API access from the terminal. Let coding agents manage Langfuse for you, or script your workflows in CI/CD.

Interact with your Langfuse data programmatically from your IDE. Manage prompts, query traces, and more.

Enterprise Scale and Security.

Traditional observability handles many small spans. LLM systems run differently. Every step carries rich, verbose I/O that legacy platforms can't handle at scale. Langfuse ingests and queries LLM traces reliably at enterprise scale while following strict compliance frameworks.

Architecture

Reliability at Scale

- 23M+ SDK installs/month
- 10+ billion observations processed per month
- 2,300+ customers
- 99.9% uptime

Security & Compliance

Why use Langfuse?

Langfuse is the most widely adopted open-source LLM engineering platform. Developers who value open source and control over their data build production-grade agents and LLM applications with Langfuse.

The full cycle

Langfuse powers the entire development cycle, from prototype to full-scale production loads.

Unified Platform

All components of Langfuse work great standalone but excel when used together.

Open Source (MIT)

Inspect the code. Self-host for free. We are the largest OSS community in our category.

OTel native

Standard trace format. Works with existing OpenTelemetry instrumentation.

80+ integrations

Works with any model, any framework, and any stack.

Built for Scale

The ClickHouse backend lets you query millions of traces in milliseconds.

Async by default

Tracing never blocks your application. Background processing, automatic batching.
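The general pattern behind non-blocking tracing can be sketched with a queue and a background thread: the application enqueues events and returns immediately, while a worker groups them into batches and sends them. `BatchingExporter` and its `send` callback are hypothetical names for this illustration, and the sketch makes no claim about how the Langfuse SDKs are implemented internally.

```python
import queue
import threading


class BatchingExporter:
    """Sends events in batches from a background thread (illustrative sketch)."""

    def __init__(self, send, batch_size=3, flush_interval=0.5):
        self._send = send                      # callable that receives a list of events
        self._batch_size = batch_size
        self._flush_interval = flush_interval  # seconds to wait for the next event
        self._queue = queue.Queue()
        self._stop = threading.Event()
        self._worker = threading.Thread(target=self._run, daemon=True)
        self._worker.start()

    def enqueue(self, event):
        # Returns immediately; the caller never waits on the send call.
        self._queue.put(event)

    def _drain(self, limit):
        # Pull up to `limit` already-queued events without blocking.
        batch = []
        while len(batch) < limit:
            try:
                batch.append(self._queue.get_nowait())
            except queue.Empty:
                break
        return batch

    def _run(self):
        while not self._stop.is_set():
            try:
                first = self._queue.get(timeout=self._flush_interval)
            except queue.Empty:
                continue
            self._send([first] + self._drain(self._batch_size - 1))

    def shutdown(self):
        # Stop the worker, then flush whatever is still queued.
        self._stop.set()
        self._worker.join()
        while batch := self._drain(self._batch_size):
            self._send(batch)
```

Calling `shutdown()` before the process exits matters in short-lived scripts and serverless functions, where queued events would otherwise be lost.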

Loved by Agents

CLI, MCP, accessible docs: coding agents love working with Langfuse.

Production-proven

Billions of events processed per month. 23M installs/month. Fortune 50 deployments.

Shipping Velocity

The AI space is changing fast. We understand what patterns matter and ship daily.

Get Started — Free tier: 50k observations/month. No credit card required.

Start improving your agents in under 5 minutes.

Need help? Talk to Sales or reach out to Support.

Questions & Answers

Langfuse is an open-source LLM engineering platform that helps teams build, monitor, and improve their AI applications. It covers the full development lifecycle with tracing, prompt management, evaluations, and analytics dashboards, all in one place. Langfuse is used by 2,300+ companies and processes billions of observations per month. You can try it instantly with the public demo project or sign up for free.